We’re familiar with the value of digital analytics for understanding how users interact with websites and digital services, but the data can also produce practical operational information that would be hard to get any other way.

We added Google Analytics custom tracking to the alerts and error messages shown to users on GOV.UK when they search for a local authority service. The aim was to spot friction points in the journey and improve the user experience.

But there was a surprise in store - the alerts also give very specific insights into problems with the underlying data which we would not have picked up from other sources. Now we can prioritise maintenance of the data according to use. The data helps us improve the quality of service for more people at less cost.

Background

There are pages on GOV.UK where you can use your postcode to look up the right place to find out about services offered by your local authority, for example, paying your Council Tax. The postcode is used to perform a lookup in a database of local authorities and services. The result should be a link to the specific page on the authority's website. If there's no link data, the system defaults to the authority's home page.

Postcode searches can cause usability problems. Even if the site deals with whether the code contains a space or not and auto-corrects common mistakes such as 'o' for '0' (GOV.UK does both), there will still be failures.
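
As a rough illustration of that kind of forgiving input handling, here is a minimal Python sketch. It is not GOV.UK's actual code: the function name `normalise_postcode` and the simplified pattern are assumptions made for this example, and real postcode validation has more special cases.

```python
import re
from typing import Optional

# Simplified pattern (an assumption for illustration): one or two letters,
# a digit, an optional digit or letter, then the inward code (digit + 2 letters).
POSTCODE_RE = re.compile(r"^[A-Z]{1,2}[0-9][0-9A-Z]?[0-9][A-Z]{2}$")


def normalise_postcode(raw: str) -> Optional[str]:
    """Return a tidied postcode such as 'SW1A 2AA', or None if unusable."""
    # Ignore spacing and letter case entirely.
    compact = re.sub(r"\s+", "", raw).upper()
    if len(compact) < 5:
        return None
    outward, inward = compact[:-3], compact[-3:]
    # The inward code always starts with a digit, so a letter O in that
    # position is almost certainly a mistyped zero.
    if inward[0] == "O":
        inward = "0" + inward[1:]
    if POSTCODE_RE.match(outward + inward):
        return f"{outward} {inward}"
    return None
```

Under these assumptions, `normalise_postcode("sw1a2aa")` and `normalise_postcode("SW1A 2AA")` give the same result, while a town name such as "Manchester" returns `None` and would trigger an alert.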

In this case we identified four types of failure:

- The postcode has been entered in an incorrect format which has got past the autocorrection

- The format is correct but that postcode is not in the database (perhaps it's a new address)

- The postcode has been verified but cannot be matched to a local authority in the database

- The postcode has been matched to a local authority, but there is no link to the relevant service in the database

The first two failure types trigger the same error message; the other two produce different messages. But since none of these failures involves loading a new page, they are not visible in Google Analytics (GA) using basic pageview tracking.

So we had no idea how many people were having problems, let alone which types of look-up failures were causing most problems.
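
The four failure types listed above can be sketched as a single classification step. This is only an illustration under stated assumptions: the names `LookupFailure` and `classify_lookup`, the in-memory dictionaries standing in for the database, and the simplified postcode pattern are all hypothetical.

```python
import re
from enum import Enum
from typing import Dict, Optional, Tuple

# Simplified format check (an assumption; expects an already tidied postcode).
POSTCODE_RE = re.compile(r"^[A-Z]{1,2}[0-9][0-9A-Z]? [0-9][A-Z]{2}$")


class LookupFailure(Enum):
    INVALID_FORMAT = "invalidFormat"
    POSTCODE_NOT_FOUND = "postcodeNotFound"
    NO_AUTHORITY_MATCH = "noAuthorityMatch"
    NO_SERVICE_LINK = "noServiceLink"


def classify_lookup(
    postcode: str,
    service: str,
    authority_by_postcode: Dict[str, Optional[str]],
    links: Dict[Tuple[str, str], str],
) -> Optional[LookupFailure]:
    """Return the failure type for a lookup, or None if a service link exists."""
    if not POSTCODE_RE.match(postcode):
        return LookupFailure.INVALID_FORMAT
    if postcode not in authority_by_postcode:
        return LookupFailure.POSTCODE_NOT_FOUND
    authority = authority_by_postcode[postcode]
    if authority is None:
        return LookupFailure.NO_AUTHORITY_MATCH
    if (authority, service) not in links:
        # This is the case where the user is sent to the council home page.
        return LookupFailure.NO_SERVICE_LINK
    return None
```

Keeping the failure type as an explicit value, separate from the message shown to the user, is what makes it possible to count each type on its own even when two of them share the same wording.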

What we did

We added GA Event Tracking to record which of the four failure types is involved each time a user sees an alert.

- We use 'Event Category' to identify that these are user alerts within a specific section of the site.

- The 'Event Action' records which of the four error states has caused the alert.

- The actual message text shown is recorded in the 'Event Label' so that we can experiment with different wording.

GA automatically stores the page from which the event was sent, so we do not need to track that explicitly.
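
To make the mapping concrete, here is a minimal sketch of one such event written as a Universal Analytics Measurement Protocol hit, where `ec`, `ea` and `el` carry the Event Category, Action and Label. It is an illustration only, not the tracking code used on GOV.UK: the category string, the function name `build_alert_event` and the example values are assumptions.

```python
from typing import Dict
from urllib.parse import urlencode

# Hypothetical category name; the real one is not given in this post.
ALERT_CATEGORY = "userAlerts:localServices"


def build_alert_event(
    failure_type: str,
    message_text: str,
    page_path: str,
    tracking_id: str,
    client_id: str,
) -> Dict[str, str]:
    """Build the parameters for one alert event. The postcode is never included."""
    return {
        "v": "1",              # protocol version
        "tid": tracking_id,    # GA property ID
        "cid": client_id,      # anonymous client ID
        "t": "event",          # hit type
        "ec": ALERT_CATEGORY,  # Event Category: alerts in this section of the site
        "ea": failure_type,    # Event Action: which error state caused the alert
        "el": message_text,    # Event Label: the message text shown to the user
        # Browser-side tracking attaches the page automatically; it is passed
        # here only because this sketch assembles the hit by hand.
        "dp": page_path,
    }
```

Passing the result to `urlencode` gives the form-encoded body of the hit. Note that the function has no postcode parameter at all, which is one simple way to make sure the postcode cannot end up in the analytics data.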

What we found out

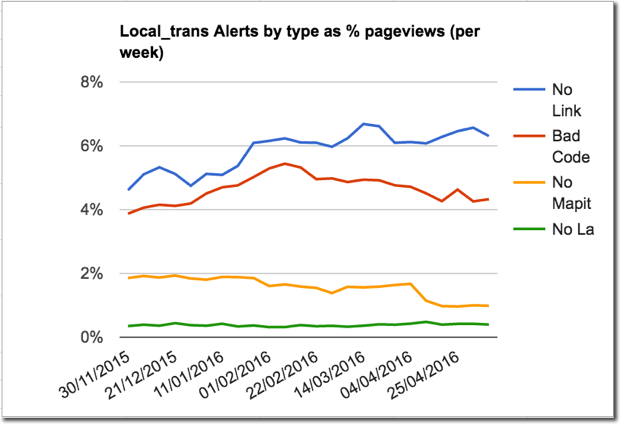

As soon as the tracking went live we got our first insights. For the first time we had data to show what was causing most trouble for users.

We had wondered if the quality of the postcode data would be a major issue, because we knew the database was out of date and deserved attention. User feedback showed plenty of complaints about the system not being able to find postcodes for new buildings. But that turned out not to be a problem affecting many users. (Useful reminder: always cross-check feedback complaints with other data to confirm how many people might be affected.)

Far more people were having problems because what they entered was not a valid postcode. The site auto-corrects predictable mistakes and does not care whether spaces are entered or not. So our hypothesis is that people are entering the names of towns, local authorities or addresses. We don’t have data on this because we deliberately do not record the postcodes in GA to respect people’s privacy.

But the most interesting and practical insight was that the biggest failure was people looking up a service but only getting a link to the council home page. That is caused by the database having no link data for the specific service. The links database is maintained by the local authorities via an old admin system which has not been developed for some time. The system is hard to use and the administrators have many other responsibilities. About 6% of service search page views were producing a ‘missing link, default to the council home page’ result.

What we did with what we found out

This new data drove action at both a macro (tactical) level and a micro (operational) level.

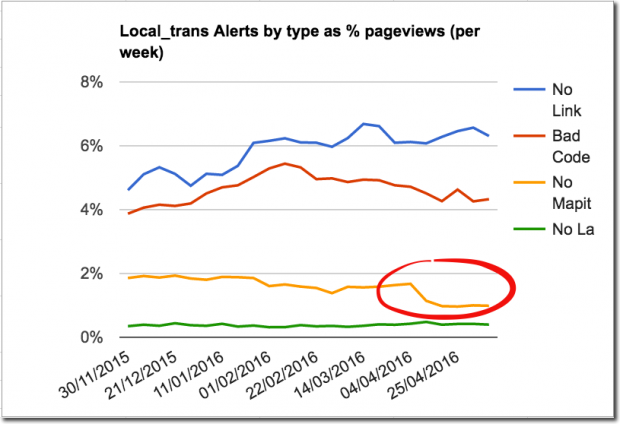

Evidence that the quality of link data was the biggest problem was used to shape our tactics for improving the quality of the system. Work was already in the pipeline to move the database and admin interface to GDS platforms to reduce costs. The new data made it clear that we could make a big improvement to user experience if we could improve the quality of the link data. So the roadmap was iterated to not only provide a new admin interface, but also to take responsibility for maintaining some of the links. We've written a separate post about how we're changing the way we manage links to local council websites. Meanwhile, data on which links were most popular is being used to focus the system on the most in-demand local authority service links - which helps us reduce costs.

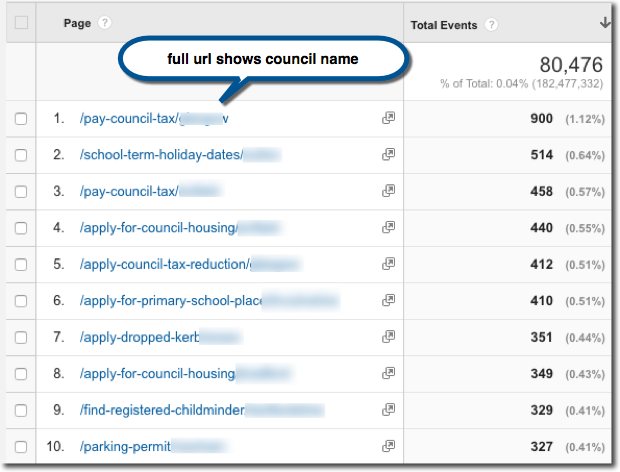

At an operational level, maintaining those links will also be more efficient as a direct result of this tracking work. The surprise* bonus which immediately jumped out of the first look at the reports from this tracking was that the 'event page' data showed which service / local authority combinations were triggering most missing link errors. So we can see which ones need most urgent attention based on current data. Searches are affected by seasonal and geographic variations (for example, school closures because of bad weather), so this specific information can allow one person to react quickly where it will make most difference to users. The data makes it practical to maintain a quality service for what users actually need in a very simple way.
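
The prioritisation described above amounts to counting missing-link events per page and sorting. A minimal sketch, assuming the event report has been exported as rows of (page path, event action, total events); the row layout, the action name and the function name `rank_missing_links` are hypothetical.

```python
from collections import Counter
from typing import Iterable, List, Tuple

# One exported report row: (page path, event action, total events).
Row = Tuple[str, str, int]


def rank_missing_links(
    rows: Iterable[Row],
    missing_link_action: str = "noServiceLink",
    top_n: int = 10,
) -> List[Tuple[str, int]]:
    """Return the pages with the most missing-link events, highest first."""
    totals: Counter = Counter()
    for page_path, event_action, total_events in rows:
        # Only the missing-link failure type matters for link maintenance.
        if event_action == missing_link_action:
            totals[page_path] += total_events
    return totals.most_common(top_n)
```

Run against a recent date range, the top of this list is the set of service and local authority combinations where fixing a link would help the most users right now.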

Finally, although the out-of-date postcode data turned out to affect only about 2% of searches, we upgraded both the postcode data and the system we use to manage it, which halved the error rate and will make maintaining the data easier in the future. There's a separate detailed post about the work to improve postcode search on GOV.UK.

* The valuable bonus information came as a nice surprise because it was contained in data which we did not have to explicitly track. It was not part of the specification for the work. And that’s my excuse for not anticipating how useful it would turn out to be. It’s a great example of the GDS mantra “show the thing”. The real value became obvious when we saw the actual data.

Tim Leighton-Boyce is a Senior Performance Analyst at GOV.UK. Follow him on Twitter: @timlb

3 comments

Comment by Bradley Robert Muir posted on

So, I've finally managed to get my DOB amended at HMRC, as whoever input my data onto the system in the first place got that wrong!! I couldn't get past the 'first name, last name, NIN and date of birth' stage of the recognition process. Now the online option (via a Government Gateway account), recognizes my DOB, and I thought "Eureka, I'm in!!"... but I can't for the life of me get the system to recognize my address. Neither by selecting the correct option that DOES come up automatically using my postcode, nor by filling in my address manually in all its possible permutations. It states it uses a credit reference agency to corroborate the data so I've tried typing it in as it comes on my bank statements, payslips, HMRC correspondence and all other logical permutations or combinations... but I STILL can't get online to do what I need to do.

Futility to the nth degree.

Comment by Andrew Robertson posted on

Thanks for sharing those improvements. There will be some services where a postcode just isn't known, for example to report litter or fly-tipping if you're travelling and spot it. In those instances I would probably want to click on a map to report it. I'm aware there are third-party apps/sites that perhaps do that such as fixmystreet.com

Comment by Tim Leighton-Boyce posted on

Hi Andrew, thanks for commenting. You're right of course: postcode search isn't appropriate for some of the services and that comes across in the feedback. When this first round of improvements is completed we're hoping to iterate again. And I expect we'll use data to guide that decision...