Digital analytics is a fast-moving field, and staying on top of what constitutes best practice takes effort. The product analyst team in GDS uses analytics to understand how users interact with GOV.UK. We need to stay up to speed with the latest techniques and tools, and to make time to share what we've learned.

Sometimes that's through making contributions to other blogs, such as the post my colleague Ash Chohan was asked to write about analytics reporting with Google Apps Script. But nothing really matches face-to-face meetings with colleagues working on analytics challenges across different sectors. Listening to presentations and talking to industry colleagues in the tea breaks of conferences and events, I’m constantly reminded that there are common problems of measurement and attribution whether you’re working in the private, voluntary or public sector. Although our outcomes and budgets may differ, the same themes crop up regularly in conversation.

Search

Google, the dominant search engine, recently made big changes that have affected the analytics community. In September, Google stopped passing search terms through to analytics tools for most people’s searches. Search term analysis is a very important tool for defining user needs and designing content based on the language users actually adopt online. Losing that information is quite a blow, but there are still some alternative data sources available.

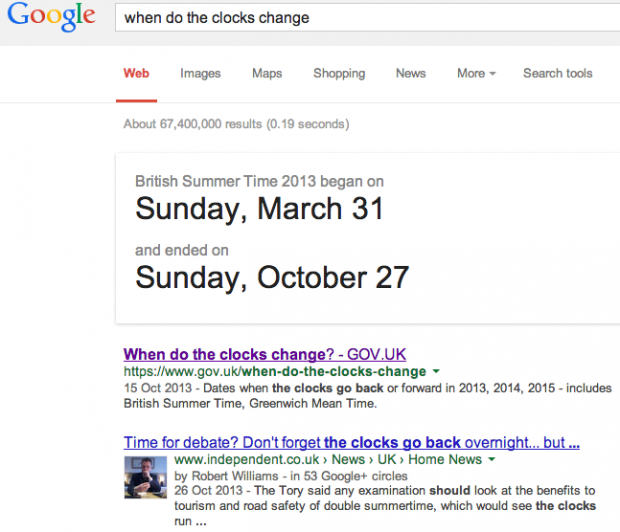

Google has also released the ‘Hummingbird’ update to its search algorithm. Hummingbird is increasingly exploiting structured data and the ‘knowledge graph’ to enhance search results. This presents opportunities for us in GOV.UK to use publisher tagging to reassure users about where content has come from. However, a search for ‘when do the clocks change’ on Google highlights a potential challenge for the future: the top result comes from Google itself. We're likely to see more government information directly available through search result pages or on third-party sites. How do we measure the success of our efforts when our content is consumed outside our domain?

Attribution

Attribution is a growing theme. Most analytics tools are session-based; the tool knows a specific anonymous user used a site on a certain device and browser. But we know people use different devices at different times to visit a site. We also know that sessions sometimes time out.
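To make the session time-out point concrete, here is a minimal sketch of how a session-based tool might split one visitor's activity into sessions. The 30-minute cut-off, the function name and the timestamps are all assumptions for illustration, not details of any particular analytics product.

```python
from datetime import datetime, timedelta

# Assumed inactivity cut-off (hypothetical; real tools may use a different value).
SESSION_TIMEOUT = timedelta(minutes=30)


def split_into_sessions(hit_times, timeout=SESSION_TIMEOUT):
    """Group one visitor's hit timestamps into sessions.

    A new session starts whenever the gap since the previous hit
    is longer than `timeout`.
    """
    sessions = []
    for t in sorted(hit_times):
        if sessions and t - sessions[-1][-1] <= timeout:
            sessions[-1].append(t)   # still within the current session
        else:
            sessions.append([t])     # gap too long: start a new session
    return sessions


# Hypothetical hits from one anonymous visitor on one device.
hits = [
    datetime(2013, 11, 1, 9, 0),
    datetime(2013, 11, 1, 9, 10),
    datetime(2013, 11, 1, 9, 55),  # 45 minutes after the previous hit
]

sessions = split_into_sessions(hits)
print(len(sessions))  # 2
```

The same person pausing for a long phone call, or switching from a phone to a laptop, would look like two separate visits in data grouped this way.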

How do we track user journeys to get that data without being intrusive? Similarly, how do we attribute the success of different marketing channels in getting people to a desired goal? Many campaigns have various online and offline components and some thought needs to go into what is measurable and how much each touchpoint (such as a poster or a display ad) contributed to the desired outcome.
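As a rough illustration of why the choice of attribution model matters, the sketch below compares two simple, widely used rules: "last touch", which gives all the credit to the final touchpoint, and "linear", which shares it equally. The journey and the function names are made up for the example; real campaigns would need far more thought about what can actually be measured.

```python
from collections import defaultdict


def last_touch(journey):
    """Give all the credit to the final touchpoint before the goal."""
    if not journey:
        raise ValueError("journey must contain at least one touchpoint")
    return {journey[-1]: 1.0}


def linear(journey):
    """Share the credit equally across every touchpoint in the journey."""
    if not journey:
        raise ValueError("journey must contain at least one touchpoint")
    credit = defaultdict(float)
    share = 1.0 / len(journey)
    for touchpoint in journey:
        credit[touchpoint] += share
    return dict(credit)


# Hypothetical journey that ended in a completed goal.
journey = ["poster", "display ad", "search", "display ad"]

print(last_touch(journey))  # {'display ad': 1.0}
print(linear(journey))      # {'poster': 0.25, 'display ad': 0.5, 'search': 0.25}
```

Under last touch the poster gets no credit at all, while the linear model gives it a quarter. Neither is "right"; they simply encode different assumptions about how each touchpoint contributed.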

Conversion optimisation

Conversion to a goal (be it making a sale, signing up to a newsletter, or completing an application for a benefit such as Carer’s Allowance) is a vital metric to most organisations. One of the challenges for a digital analyst is to find the stories in a sea of data. A number of recent speakers have argued for a framework for optimisation to provide a clear way of working with user researchers and product managers. Frameworks can help capture user needs and business goals, develop hypotheses, shape experiments and measure the results.
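One way the "measure the results" step might look in practice is a simple comparison of conversion rates between two versions of a page. The sketch below uses a standard two-proportion z-test; the figures are invented and the function names are hypothetical, so treat it as an outline rather than a recommended method.

```python
import math


def conversion_rate(conversions, visitors):
    """Proportion of visitors who reached the goal."""
    return conversions / visitors


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of versions A and B.

    Returns (z, p_value) for a two-sided test using the pooled
    standard error. Assumes the pooled rate is strictly between 0 and 1.
    """
    p_a = conversion_rate(conv_a, n_a)
    p_b = conversion_rate(conv_b, n_b)
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Invented figures: 100 of 1,000 visitors converted on A, 150 of 1,000 on B.
z, p_value = two_proportion_z_test(100, 1000, 150, 1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value suggests the difference is unlikely to be down to chance alone, but it says nothing about why users behaved differently, which is where working with user researchers comes in.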

Tricks of the trade

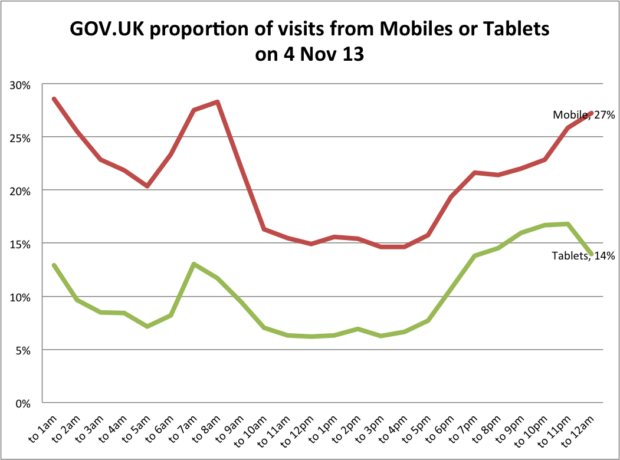

Some speakers are generous not only with their ideas, but also with their methods for using tools more effectively. A key reminder for us was to segment data to better understand what is going on. For example, the graph above segments visits by mobile and tablet on GOV.UK. It shows that mobile devices are used more in the morning and evening. Our next step will be to investigate what these morning and evening mobile visitors are doing on GOV.UK.
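For anyone who wants to try this kind of segmentation on exported data, here is a small sketch that counts visits by device and hour of day, then works out what share of each device's visits falls in each hour. The data is made up and the function names are hypothetical; it only shows the shape of the calculation.

```python
from collections import Counter


def visits_by_device_and_hour(visits):
    """Count visits for each (device, hour) pair.

    `visits` is an iterable of (device, hour) tuples, where hour is 0-23.
    """
    counts = Counter()
    for device, hour in visits:
        counts[(device, hour)] += 1
    return counts


def hourly_share(counts, device):
    """Share of one device's visits that falls in each hour of the day."""
    total = sum(n for (d, _), n in counts.items() if d == device)
    if total == 0:
        return {}
    return {h: n / total for (d, h), n in counts.items() if d == device}


# Made-up sample: (device, hour of day) for each visit.
visits = [
    ("mobile", 8), ("mobile", 8), ("mobile", 20), ("mobile", 13),
    ("tablet", 20), ("tablet", 21),
]

counts = visits_by_device_and_hour(visits)
print(hourly_share(counts, "mobile"))  # {8: 0.5, 20: 0.25, 13: 0.25}
```

Comparing shares rather than raw counts makes it easier to see when each device is used, even though the devices have very different overall volumes.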

In the spirit of sharing, here's a little Excel trick I learned on the conference trail. Don’t use ‘merge and center cells’; use ‘center across selection’ instead. It’s a bit more fiddly to implement, but it avoids all the nasty things merged cells do when you're trying to copy or reformat.